Riesz Representation Theorem, Hermitian Operators, Spectral Theorems (Linear Maps, pt 3)

This is the third installment of a condensed summary of linear algebra theory following Axler’s text.

- Part one covers the basics of vector spaces and linear maps, up to Strang’s four fundamental subspaces.

- Part two covers the basics of operators and inner product spaces.

We continue with deeper results regarding inner product spaces and operators on them.

-

(Riesz Representation Theorem.) Suppose $V$ is a finite-dimensional inner product space and $\varphi$ is a linear functional on $V$. Then there is a unique vector $u \in V$ such that

\[\varphi(v) = \inner{v}{u}\]for every $v \in V$.

-

(Motivating example.) Consider the linear functional $\varphi: \P_2(\R) \rightarrow \R$ defined by

\[\varphi(p) = \int_{-1}^1 p(t)\cos(\pi t)\, dt\]where the inner product on $\P_2(\R)$ is $\inner{p}{q} = \int_{-1}^1 p(t)q(t)\, dt$. It is not obvious that there exists $u \in \P_2(\R)$ such that

\[\varphi(p) = \inner{p}{u}\]for every $p \in \P_2(\R)$ (we cannot take $u(t) = \cos(\pi t)$ because it is not an element of $\P_2(\R)$).

-

(Proof.) First we show that there exists some $u \in V$ such that $\varphi(v) = \inner{v}{u}$ for all $v \in V$. Let $e_1,\dots,e_n$ be an orthonormal basis of $V$. Then we have

\[\begin{align*} \varphi(v) &= \inner{v}{e_1}\varphi(e_1) + \dots + \inner{v}{e_n}\varphi(e_n) \\ &= \inner{v}{\overline{\varphi(e_1)}e_1 + \dots + \overline{\varphi(e_n)}e_n} . \end{align*}\]If we set $u = \overline{\varphi(e_1)}e_1 + \dots + \overline{\varphi(e_n)}e_n$, then we have $\varphi(v) = \inner{v}{u}$ for all $v \in V$.

We show uniqueness by supposing both $u_1$ and $u_2$ satisfy the property above. Then $\inner{v}{u_1} - \inner{v}{u_2} = 0$, that is, $\inner{v}{u_1 - u_2} = 0$, for all $v \in V$. Taking $v = u_1 - u_2$ gives $\norm{u_1 - u_2}^2 = 0$, so by definiteness of the inner product $u_1 - u_2 = 0$, i.e. $u_1 = u_2$.
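
As a worked instance of the formula $u = \overline{\varphi(e_1)}e_1 + \dots + \overline{\varphi(e_n)}e_n$, here is a sketch for the motivating example. It assumes the orthonormal basis of $\P_2(\R)$ obtained by applying Gram-Schmidt to $1, x, x^2$ with respect to the integral inner product on $[-1,1]$; that basis is not derived in this summary, so treat it as an assumption of the example:

\[e_1 = \sqrt{\tfrac{1}{2}}, \qquad e_2 = \sqrt{\tfrac{3}{2}}\,x, \qquad e_3 = \sqrt{\tfrac{45}{8}}\left(x^2 - \tfrac{1}{3}\right).\]

Since $\int_{-1}^1 \cos(\pi t)\,dt = 0$ and $t\cos(\pi t)$ is odd, we get $\varphi(e_1) = \varphi(e_2) = 0$. Integrating by parts twice gives $\int_{-1}^1 t^2\cos(\pi t)\,dt = -\frac{4}{\pi^2}$, so

\[\begin{align*} \varphi(e_3) &= \sqrt{\tfrac{45}{8}}\int_{-1}^1 \left(t^2 - \tfrac{1}{3}\right)\cos(\pi t)\,dt = -\frac{4}{\pi^2}\sqrt{\tfrac{45}{8}}, \\ u &= \varphi(e_3)\,e_3 = -\frac{45}{2\pi^2}\left(x^2 - \frac{1}{3}\right). \end{align*}\]

No conjugates are needed here because the scalars are real.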

-

Note that the order of the slots in $\inner{v}{u}$ matters: $v$ sits in the first slot because the proof starts from the expansion $v = \inner{v}{e_1}e_1 + \dots + \inner{v}{e_n}e_n$, and over $\C$ swapping the slots would conjugate the value.

-

-

If $U$ is a subset of $V$, then the orthogonal complement of $U$, denoted $U^\bot$, is the set of all vectors in $V$ that are orthogonal to every vector in $U$:

\[U^\bot = \{v \in V : \inner{v}{u} = 0 \text{ for every } u \in U \}.\] - Basic properties of orthogonal complement. Properties 3 and 4 follow from the definiteness of the inner product.

- If $U$ is a subset of $V$, then $U^\bot$ is a subspace of $V$.

- $\{0\}^\bot = V.$

- $V^\bot = \{0\}.$

- If $U$ is a subset of $V$, then $U \cap U^\bot \subset \{0\}.$

- $U \cap U^\bot = \{0\}$ when $0 \in U$, e.g. when $U$ is a subspace.

- If $U$ and $W$ are subsets of $V$ and $U \subset W$, then $W^\bot \subset U^\bot.$

-

Suppose $U$ is a finite-dimensional subspace of $V$. Then

\[V = U \oplus U^\bot.\]-

First we show that $V = U + U^\bot$, i.e., we show that every $v \in V$ can be expressed as a sum $u + w$ where $u \in U$ and $w \in U^\bot$. Let $e_1,\dots,e_m$ be an orthonormal basis of $U$. We can write

\[v = \underbrace{\inner{v}{e_1}e_1 + \dots + \inner{v}{e_m}e_m}_u + \underbrace{v - \inner{v}{e_1}e_1 - \dots - \inner{v}{e_m}e_m}_w.\]Note that $u$ and $w$ as defined above satisfy $u \in U$ (trivially) and $w \in U^\bot$. (The latter holds because $\inner{w}{e_j} = \inner{v}{e_j} - \inner{v}{e_j} = 0$ for each $j$; by conjugate linearity in the second slot, $w$ is then orthogonal to every linear combination of $e_1,\dots,e_m$, i.e. to every vector in $U$.) Therefore $V = U + U^\bot$.

Since $U \cap U^\bot = \{0\}$ (Property 4 above), the sum is direct, so $V = U \oplus U^\bot$.

-

-

Suppose $V$ is finite-dimensional and $U$ is a subspace of $V$. Then

\[\dim U\uperp = \dim V - \dim U.\]- This follows because the decomposition $V = U \oplus U\uperp$ is a direct sum, so $\dim V = \dim U + \dim U\uperp$.

-

Suppose $U$ is a finite-dimensional subspace of $V$. Then

\[U = (U\uperp)\uperp.\]-

First note that $U \subset (U\uperp)\uperp$ because for any $u \in U$, $\inner{u}{v} = 0$ for all $v \in U\uperp$ (by definition of $U\uperp$), which is exactly the statement that $u \in (U\uperp)\uperp$.

To show that $U \supset (U\uperp)\uperp$, consider any $v \in (U\uperp)\uperp$. Since $v \in V$, we can express it as a sum $v = u + w$, where $u \in U$ and $w \in U\uperp$. This implies $v-u = w \in U\uperp$. But since we showed that $U \subset (U\uperp)\uperp$, we have $u \in (U\uperp)\uperp$, so $v - u \in (U\uperp)\uperp$ as well. Hence $v - u \in U\uperp \cap (U\uperp)\uperp = \{0\}$, i.e. $v = u \in U$.

-

- Suppose $U$ is a finite-dimensional subspace of $V$. The orthogonal projection of $V$ onto $U$ is the operator $P_U \in \L(V)$ defined as follows: For $v \in V$, write $v = u + w$, where $u \in U$ and $w \in U\uperp$. Then $P_U v = u$.

- This operator is well-defined because $V = U \oplus U\uperp$.

- The orthogonal decomposition of $u$ into $cv + (u - cv)$ discussed earlier is actually the orthogonal projection of $u$ onto $\span v$.

- (Properties of the orthogonal projection $P_U$.) Suppose $U$ is a finite-dimensional subspace of $V$ and $v \in V$. Then

- $P_U \in \L(V);$

- $P_U u = u$ for every $u \in U;$

- $P_U w = 0$ for every $w \in U\uperp;$

- $\range P_U = U;$

- By definition $\range P_U \subset U$. Also, every $u \in U$ is the output of $P_U u$.

- $\null P_U = U\uperp;$

- $v - P_U v \in U\uperp;$

- From the unique decomposition $v = u + w = P_U v + w$, we have that $v - P_U v = w \in U\uperp$.

- (Idempotence.) $P_U ^2 = P_U;$

- $\norm {P_U v } \leq \norm v $;

- Apply Pythagorean Theorem to $v = P_U v + w$.

-

for every orthonormal basis $e_1,\dots,e_m$ of $U$,

\[P_U v = \inner{v}{e_1}e_1 + \dots + \inner{v}{e_m}e_m.\]- Follows from the proof of the decomposition of $V$ into the direct sum $V = U \oplus U\uperp$.

-

(Minimizing the distance to a subspace.) Suppose $U$ is a finite-dimensional subspace of $V$, $v \in V$, and $u \in U$. Then

\[\norm{v-P_U v } \leq \norm {v - u}.\]-

We have

\[\begin{align} \norm{v-P_U v }^2 &\leq \norm{v-P_U v }^2 + \norm{P_U v - u}^2 \\ &= \norm{v - u}^2 \end{align}\]where $(1)$ holds because $\norm{P_U v - u}^2 \geq 0$ and $(2)$ follows from the Pythagorean Theorem, since $v - P_U v \in U\uperp$ and $P_U v - u \in U$ are orthogonal.

-

This is just the proof that the length of a side of a right triangle is at most the length of the hypotenuse.
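
The projection formula and the minimization property can be checked numerically. The following is a minimal sketch in plain Python, assuming the standard inner product on $\C^n$ (linear in the first slot); the helper names `inner`, `norm`, and `project`, and the particular plane $U \subset \R^3$, are illustrative choices rather than anything from the text.

```python
import math

def inner(x, y):
    """Standard inner product: linear in the first slot, conjugate-linear in the second."""
    return sum(a * complex(b).conjugate() for a, b in zip(x, y))

def norm(x):
    return math.sqrt(inner(x, x).real)

def project(v, onb):
    """Return P_U v = <v,e_1> e_1 + ... + <v,e_m> e_m for an orthonormal basis onb of U."""
    result = [0j] * len(v)
    for e in onb:
        c = inner(v, e)
        result = [r + c * x for r, x in zip(result, e)]
    return result

s = 1 / math.sqrt(2)
onb = [[1, 0, 0], [0, s, s]]          # orthonormal basis of a plane U in R^3
v = [1, 2, 4]
p = project(v, onb)                   # approximately (1, 3, 3)
w = [a - b for a, b in zip(v, p)]     # v - P_U v, which should lie in U-perp

u = [1, 2, 2]                         # some other vector in U
dist_p = norm(w)                      # distance from v to P_U v
dist_u = norm([a - b for a, b in zip(v, u)])  # distance from v to u
```

Here `w` is orthogonal to both basis vectors up to rounding, projecting `p` again returns `p`, and `dist_p` does not exceed `dist_u`, matching the properties listed above.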

-

Operators on Inner Product Spaces

$V$ and $W$ are finite-dimensional inner product spaces.

-

Suppose $T \in \L(V,W)$. The adjoint of $T$ is the function $T\adj : W \rightarrow V$ such that

\[\inner{Tv}{w} = \inner{v}{T\adj w}\]for every $v \in V$ and every $w \in W$.

-

It may require some convincing that the adjoint is well-defined. For any given $w$, we can define an associated linear functional $\varphi_{T,w}: V \rightarrow \F$ by

\[\varphi_{T,w} (v) = \inner{Tv}{w}.\]By the Riesz Representation Theorem, we know that there exists a unique $u \in V$ such that $\varphi_{T,w} (v) = \inner{v}{u}$. Then we define $T\adj(w)$ such that $T\adj(w) = u$.

-

The mechanical implication is that you can “swap the $T$” from one slot to the other in an inner product (so long as you add a star). In other words, you can freely convert between inner products in $W$ and inner products in $V$: $\inner{Tv}{w} = \inner{v}{T\adj w}$ (and $\inner{w}{Tv} = \inner{T\adj w}{v}$ by conjugate symmetry).

-

- The adjoint is a linear map. (Note that many proofs about the adjoint start with an inner product, “swap the $T$”, exploit inner product properties, then “swap back”, with the result implied by definiteness of the inner product.)

- $T\adj (w_1 + w_2) = T\adj w_1 + T\adj w_2$

-

For any $v \in V$ we have

\[\begin{align*} \inner{v}{T\adj(w_1 + w_2)} &= \inner{Tv}{w_1 + w_2} \\ &= \inner{v}{T\adj w_1} + \inner{v}{T\adj w_2} \\ &= \inner{v}{T\adj(w_1) + T\adj(w_2)} \end{align*}\]which, by definiteness of the inner product, implies $T\adj (w_1 + w_2) = T\adj w_1 + T\adj w_2.$

-

- $T\adj (\lambda w) = \lambda T\adj w$

- $\inner{v}{T\adj (\lambda w)} = \inner{Tv}{\lambda w} = \bar \lambda \inner{Tv}{w} = \bar \lambda \inner{v}{T\adj w} = \inner{v}{\lambda T\adj w}$, which implies the result by definiteness of the inner product.

- Properties of the adjoint.

- (Additivity.) Let $S, T \in \L(V,W)$. Then $(S + T)\adj = S\adj + T\adj.$

- \[\begin{align*} \inner{v}{(S + T)\adj w} &= \inner{(S+T)v}{w}\\ &= \inner{Sv}{w} + \inner{Tv}{w} \\ &= \inner{v}{S\adj w} + \inner{v}{T\adj w} \\ &= \inner{v}{S\adj w + T\adj w} \end{align*}\]

- (“Complex homogeneity”.) $(\lambda T)\adj = \bar \lambda T \adj.$

- Let $T \in \L(V,W), S \in \L(W,U),$ and $u \in U$. Then $(ST)\adj = T\adj S \adj$.

- $\inner{STv}{u} = \inner{Tv}{S\adj u} = \inner{v}{T\adj S\adj u}.$

- $(T\adj)\adj = T.$

- $ \inner{w}{(T\adj)\adj v} = \inner{T\adj w}{v} = \inner{w}{Tv},$ where the last step uses conjugate symmetry.

- $I\adj = I.$

- Let $v,u \in V$. Then $\inner{v}{u} = \inner{Iv}{u} = \inner{v}{I\adj u} $.

- Null space and range of $T\adj$.

- $\null T\adj = (\range T)\uperp$.

- The statement $\inner{Tv}{w} = 0$ for all $v \in V$ implies that $w \in (\range T)\uperp$. The equivalent statement $\inner{v}{T\adj w} = 0$ for all $v \in V$ implies that $w \in \null T \adj$.

- $\null T = (\range T\adj)\uperp$.

- Use $(T\adj)\adj = T$.

- $(\null T\adj)\uperp = \range T.$

- Apply $(U\uperp)\uperp = U$ to $\null T\adj = (\range T)\uperp$.

- $(\null T)\uperp = \range T\adj.$

- Apply $(U\uperp)\uperp = U$ to $\null T = (\range T\adj)\uperp$.

-

Let $T \in \L(V,W)$. Suppose $e_1,\dots,e_n$ is an orthonormal basis of $V$ and $f_1,\dots,f_m$ is an orthonormal basis of $W$. Then

\[\M(T\adj, (f_1,\dots,f_m),(e_1,\dots,e_n))\]is the conjugate transpose of

\[\M(T,(e_1,\dots,e_n),(f_1,\dots,f_m)).\]-

The $k$th column of $\M(T)$ contains the coefficients of $Te_k = \inner{Te_k}{f_1}f_1 + \dots + \inner{Te_k}{f_m}f_m$. The $j$th coefficient is $\inner{Te_k}{f_j}$, so $\M(T) _ {j,k} = \inner{Te_k}{f_j}$.

Compare with $\M(T\adj)$. Its $j$th column contains the coefficients of $T\adj f_j = \inner{T\adj f_j}{e_1}e_1 + \dots + \inner{T\adj f_j}{e_n}e_n$. The $k$th coefficient is $\M(T\adj) _ {k,j} = \inner{T\adj f_j}{e_k} = \inner{f_j}{T e_k} = \overline{\inner{T e_k}{f_j}} = \overline{\M(T)_ {j,k}}$, which is the $(k,j)$ entry of the conjugate transpose of $\M(T)$.
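
To see the conjugate-transpose description in action, here is a small sketch in plain Python. It assumes the standard inner products on $\C^3$ and $\C^2$ with their standard (orthonormal) bases; the matrix `A` and the vectors `v`, `w` are arbitrary illustrative values, and the helper names are hypothetical.

```python
def inner(x, y):
    """Standard inner product: linear in the first slot, conjugate-linear in the second."""
    return sum(a * complex(b).conjugate() for a, b in zip(x, y))

def matvec(A, x):
    """Apply the matrix A (a list of rows) to the vector x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

def conj_transpose(A):
    """Swap rows and columns and conjugate every entry."""
    return [[complex(A[i][j]).conjugate() for i in range(len(A))]
            for j in range(len(A[0]))]

A = [[1 + 2j, 0, 3j],
     [2, 1 - 1j, 4]]            # matrix of some T : C^3 -> C^2
v = [1, 1j, 2 - 1j]             # a vector in C^3
w = [1 - 1j, 2j]                # a vector in C^2

lhs = inner(matvec(A, v), w)                  # <Tv, w>, computed in C^2
rhs = inner(v, matvec(conj_transpose(A), w))  # <v, T* w>, computed in C^3
```

The two values agree, which is the defining property of the adjoint with $\M(T\adj)$ taken to be the conjugate transpose of $\M(T)$.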

-

-

Now we switch to focusing on operators on inner product spaces.

-

An operator $T\in \L(V)$ is called self-adjoint (or Hermitian) if $T = T\adj$. In other words, $T \in \L(V)$ is self-adjoint if and only if

\[\inner{Tv}{w} = \inner{v}{Tw}\]for all $v,w \in V$.

-

(Analogy.) Taking the adjoint of an operator is like taking the complex conjugate of a number. Hermitian operators are like real numbers in that $z$ is real if and only if $\bar{z} = z$. We will see further similarities between Hermitian operators and $\R$.

-

Every eigenvalue of a self-adjoint operator is real.

-

Suppose $T$ is a self-adjoint operator on $V$. Let $\lambda$ be an eigenvalue of $T$, and let $v$ be a nonzero vector in $V$ such that $Tv = \lambda v$. Then

\[\lambda \norm{v}^2 = \inner{\lambda v}{v} = \inner{Tv}{v} = \inner{v}{Tv} = \inner{v}{\lambda v} = \bar \lambda \inner{v}{v} = \bar \lambda \norm{v}^2,\]so $\bar \lambda = \lambda$ (because $v \neq 0$) and therefore $\lambda$ is real.

-

- Over $\C$, if $\inner{Tv}{v} = 0$ for all $v \in V$, then $T = 0$.

- Note that this is false for vector spaces over $\R$. Counterexample: rotation by $\pi/2$ on $\R^2$, which sends every vector to one orthogonal to it but is not the zero operator.

-

(Proof.) For any $u, w \in V,$ we can write

\[\begin{align*} \inner{Tu}{w} &= \frac{\inner{T(u+w)}{u+w} - \inner{T(u-w)}{u-w}}{4} \\ &\qquad + \frac{\inner{T(u+wi)}{(u+wi)} - \inner{T(u-wi)}{(u-wi)}}{4}i \\ &= \frac{2\inner{Tu}{w} + 2\inner{Tw}{u}}{4} + \frac{2\inner{Tu}{wi}+2\inner{Twi}{u}}{4}i\\ &= \frac{2\inner{Tu}{w} + 2\inner{Tw}{u}}{4} + \frac{2\inner{Tu}{w} - 2\inner{Tw}{u}}{4},\\ \end{align*}\]where the inner products in the right-hand side of the first line are written in the form $\inner{Tv}{v}$, with the following lines showing the computation. If $\inner{Tv}{v}=0$ for all $v \in V$, then we have $\inner{Tu}{w} = 0$ for any $u,w \in V$. Taking $w = Tu$, we have that $T = 0$.
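
The counterexample over $\R$ can be made concrete. As a sketch, assume the standard inner product and write rotation by $\pi/2$ as $T(x,y) = (-y,x)$. On $\R^2$ we get

\[\inner{T(x,y)}{(x,y)} = \inner{(-y,x)}{(x,y)} = -yx + xy = 0 \quad \text{for all } (x,y) \in \R^2,\]

even though $T \neq 0$. The same formula on $\C^2$ does not satisfy the hypothesis of the result: for $v = (1,i)$ we have $Tv = (-i,1)$ and

\[\inner{Tv}{v} = (-i)\cdot\overline{1} + 1\cdot\overline{i} = -2i \neq 0,\]

so there is no conflict with the statement over $\C$.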

- (Corollary.) Over $\C$, $T$ is self-adjoint if and only if $\inner{Tv}{v} \in \R$ for all $v \in V$.

-

(Proof.) For any operator $T$ and any $v \in V,$ we have

\[\begin{align*} \inner{Tv}{v} - \overline{\inner{Tv}{v}} &= \inner{Tv}{v} - \inner{v}{Tv} \\ &= \inner{Tv}{v} - \inner{T\adj v}{v} \\ &= \inner{(T-T\adj)v}{v}, \end{align*}\]where the left-hand side is $0$ if and only if $\inner{Tv}{v} \in \R$. So $\inner{Tv}{v} \in \R$ for all $v \in V$ if and only if $\inner{(T-T\adj)v}{v} = 0$ for all $v \in V$, which by the previous result (this is where $\F = \C$ is needed) holds if and only if $T - T\adj = 0$, i.e. $T$ is self-adjoint.

-

- If $T$ is a self-adjoint operator on $V$ such that $\inner{Tv}{v}=0$ for all $v\in V$, then $T=0$.

- Note that we already proved $\inner{Tv}{v}=0$ for all $v \in V$ implies $T=0$ for operators over $\C$. So, the statement above is saying that over $\R$, $\inner{Tv}{v} = 0$ for all $v \in V$ implies $T=0$ if we know $T$ is self-adjoint.

-

We already proved the claim is true over $\C$ without using self-adjointness. So assume $V$ is over $\R$. Then we have

\[\begin{align*} \inner{Tu}{w} &= \frac{\inner{T(u+w)}{u+w} - \inner{T(u - w)}{u-w}}{4} \\ &= \frac{2\inner{Tu}{w} + 2\inner{Tw}{u}}{4} \\ &= \frac{2\inner{Tu}{w} + 2\inner{w}{Tu}}{4} \\ &= \frac{2\inner{Tu}{w} + 2\overline{\inner{Tu}{w}}}{4} \\ &= \frac{2\inner{Tu}{w} + 2\inner{Tu}{w}}{4}, \\ \end{align*}\]where the inner products in the right-hand side of the first line are written in the form $\inner{Tv}{v}$, with the following lines showing the computation. If $\inner{Tv}{v}=0$ for all $v \in V$, then we have $\inner{Tu}{w} = 0$ for any $u,w \in V$. Taking $w = Tu$, we have that $T = 0$.

-

An operator $T$ is called normal if it commutes with its adjoint. In other words, $T$ is normal if

\[TT\adj = T\adj T.\] - $T$ is normal if and only if $\norm{Tv} = \norm{T\adj v}$ for all $v \in V$.

-

(Proof.) We prove the equivalence by the chain below, in which every statement involving $v$ is meant for all $v \in V$. For the second line, we note that $T\adj T - TT \adj$ is self-adjoint because $(T\adj T - TT \adj)\adj = (T\adj T)\adj - (TT\adj)\adj = T\adj T - TT \adj;$ then we use the fact proved above that $0$ is the only self-adjoint operator $S$ satisfying $\inner{Sv}{v} = 0$ for all $v$. We have

\[\begin{align*} T \text{ is normal } &\iff T\adj T - TT \adj = 0 \\ &\iff \inner{(T\adj T - TT \adj) v}{v} = 0\\ &\iff \inner{T\adj Tv}{v} - \inner{TT\adj v}{v} = 0 \\ &\iff \inner{Tv}{Tv} - \inner{T\adj v}{T\adj v} = 0 \\ &\iff \norm{Tv}^2 = \norm{T\adj v}^2 \\ &\iff \norm{Tv} = \norm{T\adj v}. \end{align*}\]
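
The norm criterion is easy to probe numerically. Below is a minimal sketch in plain Python, assuming the standard inner product so that the adjoint is the conjugate transpose; the matrices `N` (a scaled rotation, normal but not self-adjoint) and `S` (a shear, not normal) and the helper names are illustrative assumptions. Checking finitely many vectors only illustrates the criterion; it does not prove normality.

```python
import math

def matvec(A, x):
    """Apply the matrix A (a list of rows) to the vector x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

def conj_transpose(A):
    """Matrix of the adjoint with respect to the standard orthonormal basis."""
    return [[complex(A[i][j]).conjugate() for i in range(len(A))]
            for j in range(len(A[0]))]

def norm(x):
    return math.sqrt(sum(abs(a) ** 2 for a in x))

def norm_gap(A, v):
    """||Av|| - ||A* v||, which vanishes for every v exactly when A is normal."""
    return norm(matvec(A, v)) - norm(matvec(conj_transpose(A), v))

N = [[1, -1], [1, 1]]    # N N* = N* N = 2I, so N is normal
S = [[1, 1], [0, 1]]     # shear: S S* differs from S* S
test_vectors = [[1, 0], [0, 1], [2, -3 + 1j]]

gaps_N = [norm_gap(N, v) for v in test_vectors]  # all (numerically) zero
gaps_S = [norm_gap(S, v) for v in test_vectors]  # first entry is 1 - sqrt(2)
```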

-

-

(For $T$ normal, $T$ and $T\adj$ have the same eigenvectors.) Suppose $T \in \L(V)$ is normal and $v \in V$ is an eigenvector of $T$ with eigenvalue $\lambda$. Then $v$ is also an eigenvector of $T\adj$ with eigenvalue $\bar \lambda$.

-

Since $T$ is normal, $T - \lambda I$ is normal. To verify this we have

\[\begin{align*} (T - \lambda I)(T - \lambda I)\adj &= (T- \lambda I)(T\adj - \bar \lambda I) \\ &= TT\adj - \bar \lambda TI - \lambda IT\adj + \lambda \bar \lambda I \\ &= T\adj T - \lambda I T\adj - \bar \lambda T I + \bar \lambda \lambda I \\ &= (T\adj - \bar \lambda I)(T- \lambda I) \\ &= (T - \lambda I)\adj (T - \lambda I). \end{align*}\]Therefore we can use the norm definition of normal operators to prove the claim as follows. We have

\[0 = \norm{(T - \lambda I)v} = \norm{(T - \lambda I)\adj v} = \norm{(T\adj - \bar \lambda I) v },\]where the right-hand side is equivalent to the statement that $v$ is an eigenvector of $T\adj$ with eigenvalue $\bar \lambda$ .

-

-

(Orthogonal eigenvectors for normal operators.) Suppose $T \in \L(V)$ is normal. Then eigenvectors of $T$ corresponding to distinct eigenvalues are orthogonal.

-

Suppose $\alpha, \beta$ are distinct eigenvalues of $T$, with corresponding eigenvectors $u,v$. We have

\[\begin{align*} (\alpha - \beta) \inner{u}{v} &= \inner{\alpha u}{v} - \inner{\beta u}{v} \\ &= \inner{Tu}{v} - \inner{u}{\bar \beta v} \\ &= \inner{Tu}{v} - \inner{u}{T\adj v} \\ &= 0, \end{align*}\]which implies $\inner{u}{v} = 0$ because $\alpha,\beta$ are distinct.
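
A small worked example may help here. As a sketch, take $\C^2$ with the standard inner product and the operator given by the matrix below (an illustrative choice, not from the text); it equals its own conjugate transpose, so it is self-adjoint and in particular normal:

\[A = \begin{pmatrix} 2 & i \\ -i & 2 \end{pmatrix}, \qquad \det(A - \lambda I) = (2-\lambda)^2 - 1 = (\lambda - 1)(\lambda - 3).\]

The eigenvalues $1$ and $3$ are real, as predicted for a self-adjoint operator. Corresponding eigenvectors are $u = (i,1)$ for $\lambda = 3$ and $v = (-i,1)$ for $\lambda = 1$, since $Au = (3i,3)$ and $Av = (-i,1)$. They are orthogonal:

\[\inner{u}{v} = i\cdot\overline{(-i)} + 1\cdot\overline{1} = i \cdot i + 1 = 0.\]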

-

- (Complex Spectral Theorem.) Provides a complete characterization of the normal operators over $\C$.

- (Statement.) Suppose $\F = \C$ and $T \in \L(V)$. Then the following are equivalent:

- $T$ is normal.

- $V$ has an orthonormal basis consisting of eigenvectors of $T$.

- $T$ has a diagonal matrix with respect to some orthonormal basis of $V$.

-

(Proof.) Points (2) and (3) are equivalent because we know $T$ is diagonalizable if and only if it has a basis of eigenvectors.

We show (3) implies (1). If $\M(T)$ is diagonal, then $\M(T\adj)$ will be the conjugate transpose and so will also be diagonal. Since diagonal matrices commute, $T$ is normal.

Now we show (1) implies (3). We know from Schur’s theorem that there is an orthonormal basis $e_i$ with respect to which $\M(T)$ is upper-triangular. We show this $\M(T)$ is actually diagonal. The strategy is to show that each row consists of zeros except possibly the diagonal element, using the fact that $\norm{Tv} = \norm{T\adj v}$. We denote the element of $\M(T)$ in the $j$th row and $k$th column as $a_{j,k}$. We also use the fact that $\M(T\adj)$ is the conjugate transpose of $\M(T)$. For the first row of $\M(T)$, we have:

\[\begin{align*} \norm{Te_1}^2 &= \norm{T\adj e_1}^2 \\ \abs{a_{1,1}}^2 &= \abs{\overline{a_{1,1}}}^2 + \abs{\overline{a_{1,2}}}^2 + \dots + \abs{\overline{a_{1,n}}}^2 \\ &= \abs{a_{1,1}}^2 + \abs{a_{1,2}}^2 + \dots + \abs{a_{1,n}}^2, \end{align*}\]which implies that every entry in the first row of $\M(T)$ is zero except possibly $a_{1,1}$.

For the second row, we have:

\[\begin{align*} \norm{Te_2}^2 &= \norm{T\adj e_2}^2 \\ \abs{a_{2,2}}^2 &= \abs{a_{2,2}}^2 + \abs{a_{2,3}}^2 + \dots + \abs{a_{2,n}}^2, \end{align*}\]where the left-hand side uses $a_{1,2} = 0$ from the first step. This implies that every entry in the second row of $\M(T)$ is zero except possibly $a_{2,2}$. Continuing in this fashion, we have that $\M(T)$ is diagonal.

-

To develop the Real Spectral Theorem, we prove some preliminary results.

-

Suppose $b,c \in \R$. Then there is a factorization $x^2 + bx + c = (x-\lambda_1)(x-\lambda_2)$, with $\lambda_1, \lambda_2 \in \R$, if and only if $b^2 \geq 4c$.

-

We can always write $x^2 + bx + c = \left(x+ \frac{b}{2}\right)^2 + \left(c - \frac{b^2}{4}\right)$. (This is called completing the square; we convert the quadratic expression to the sum of a square and a constant.)

If $b^2 < 4c$ (i.e., $c > \frac{b^2}{4}$), then the right-hand side is strictly positive for every $x \in \R$, so the quadratic has no real zeros and no such factorization exists.

Conversely, if $b^2 \geq 4c$, then $ \frac{b^2}{4} - c \geq 0$ is the square of some real number $d$. So we can rewrite the right-hand side above as the difference of squares:

\[\begin{align*} \left(x + \frac{b}{2}\right)^2 - d^2 = \left(x + \frac{b}{2} + d\right)\left(x + \frac{b}{2} - d\right), \end{align*}\]which proves the result.
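
For a concrete instance (the numbers are chosen only for illustration), take $b = -5$ and $c = 6$, so that $b^2 = 25 \geq 24 = 4c$ and $d^2 = \frac{b^2}{4} - c = \frac{1}{4}$:

\[x^2 - 5x + 6 = \left(x - \frac{5}{2}\right)^2 - \frac{1}{4} = \left(x - \frac{5}{2} + \frac{1}{2}\right)\left(x - \frac{5}{2} - \frac{1}{2}\right) = (x-2)(x-3).\]

By contrast, $x^2 + x + 1 = \left(x + \frac{1}{2}\right)^2 + \frac{3}{4}$ has $b^2 = 1 < 4 = 4c$ and is strictly positive on $\R$, so it has no real factorization.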

-

-

(Polynomials with real coefficients have zeros in pairs.) Suppose $p \in \P(\C)$ is a polynomial with real coefficients. If $\lambda \in \C$ is a zero of $p$, then so is $\bar \lambda$.

-

(Proof.) Let $p(z) = a_0 + a_1 z + \dots + a_m z^m$. Suppose $\lambda \in \C$ is a zero of $p$. Then we have $p(\lambda) = a_0 + a_1 \lambda + \dots + a_m \lambda ^m = 0$. Taking the complex conjugate of both sides, we have

\[a_0 + a_1 \bar \lambda + \dots + a_m \bar \lambda ^m = 0,\]implying that $\bar \lambda$ is a zero.

-

-

(If a polynomial is the zero function, then the coefficients are zero.) If $a_0, a_1, \dots, a_m \in \F,$ then $a_0 + a_1 z + \dots + a_m z^m = 0 $ for all $z \in \F$ implies $a_0 = \dots = a_m = 0$.

-

(Proof by contrapositive.) Reassign $m$ to be the highest index of the coefficients such that $a_m$ is nonzero. We need to find a $z \in \F$ such that $a_0 + a_1 z + \dots + a_m z^m \neq 0$. It will suffice to find a $z$ such that $\abs{a_0 + a_1 z + \dots + a _ {m -1} z^{m - 1} }< \abs{a_m z^m} = \abs{a_m}\abs{z^ {m-1}}\abs{z}$. Using the Triangle Inequality and the multiplicativity of absolute value, we note that

\[\begin{align*} \abs{a_0 + a_1 z + \dots + a _ {m -1} z^{m - 1} } &\leq \abs{a_0} + \abs{a_1 z} + \dots + \abs{a _ {m-1} z^{m-1}} \\ &= \abs{a_0} + \abs{a_1}\abs{ z} + \dots + \abs{a _ {m - 1}}\abs{ z ^ {m - 1}}. \\ \end{align*}\]If we let $z \geq 1$, we are allowed to continue with

\[\begin{align*} \abs{a_0} + \abs{a_1}\abs{ z} + \dots + \abs{a _ {m - 1}}\abs{ z ^ {m - 1}} &\leq \left(\sum_{k=0}^{m-1} \abs{a_k}\right)\abs{z^{m-1}}. \\ \end{align*}\]We are done so long as our choice of $z$ satisfies

\[\left(\sum_{k=0}^{m-1} \abs{a_k}\right)\abs{z^{m-1}} < \abs{a_m}\abs{z^ {m-1}}\abs{z}.\]Since both sides contain the factor $\abs{z^{m-1}} > 0$, it suffices that $\abs{z}$ exceeds $\sum_{k=0}^{m-1} \abs{a_k} / \abs{a_m}$. Keeping in mind our constraint that $z \geq 1$, let

\[z := \frac{\sum_{k=0}^{m-1} \abs{a_k}}{\abs{a_m}} + 1 = \frac{\sum_{k=0}^{m} \abs{a_k}}{\abs{a_m}}.\]Then we would have

\[\abs{a_m}\abs{z^{m-1}}\abs{z} = \left(\sum_{k=0}^{m} \abs{a_k}\right)\abs{z^{m-1}} >\left(\sum_{k=0}^{m-1} \abs{a_k}\right)\abs{z^{m-1}}.\]This $z$ satisfies our inequality.
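
The choice of $z$ in the proof is explicit enough to compute. The following plain-Python sketch follows the proof's recipe; the function names `witness` and `evaluate` and the sample coefficients are hypothetical, introduced only for illustration.

```python
def witness(coeffs):
    """Given coeffs = [a_0, ..., a_m] that are not all zero, return the z from the proof.

    m is reassigned to the highest index with a nonzero coefficient, and
    z = (|a_0| + ... + |a_m|) / |a_m|, which is at least 1.
    """
    m = max(k for k, a in enumerate(coeffs) if a != 0)
    return sum(abs(a) for a in coeffs[:m + 1]) / abs(coeffs[m])

def evaluate(coeffs, z):
    """Evaluate a_0 + a_1 z + ... + a_m z^m."""
    return sum(a * z ** k for k, a in enumerate(coeffs))

coeffs = [1, -3, 2, 0]           # p(z) = 1 - 3z + 2z^2, with a trailing zero coefficient
z = witness(coeffs)              # (1 + 3 + 2) / 2 = 3.0
value = evaluate(coeffs, z)      # 1 - 9 + 18 = 10.0, so p is not the zero function

complex_coeffs = [2j, 0, 1]      # the recipe also works for complex coefficients
z_c = witness(complex_coeffs)
value_c = evaluate(complex_coeffs, z_c)
```

At the returned `z`, the leading term dominates the sum of the lower-order terms, so the polynomial cannot vanish there.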

-

-

(Factorization of a polynomial over $\R$.) Suppose $p \in \P(\R)$ is a nonconstant polynomial. Then $p$ has a unique factorization (except for the order of the factors) of the form

\[p(x) = c(x - \lambda_1) \cdots (x - \lambda_m )(x^2 + b_1x + c_1) \cdots (x^2 + b_M x + c_M),\]where $c, \lambda_1,\dots,\lambda_m,b_1,\dots,b_M,c_1,\dots,c_M \in \R,$ with $b_j^2 < 4c_j$ for each $j$.

-

(Proof.) We induct on the degree of $p$. Clearly this is true for degree $1$ and $2$ (with the latter case either factoring to two real degree-1 terms or satisfying the quadratic form with $b^2 < 4c$). Now consider some general $p$. If $p$ has only real zeros, then (considering $p$ to be over $\C$) we know $p$ has a satisfactory factorization by the factorization of polynomials over $\C$.

So we need only consider $p$ with complex but nonreal zeros. If we have a zero $\lambda \in \C$ with $\lambda \notin \R$, then we know that $\bar \lambda$ is also a zero. So we can write

\[\begin{align*} p(x) &= (x - \lambda)(x - \bar \lambda)q(x) \\ &= (x^2 - 2\Re (\lambda) x + \abs {\lambda}^2)q(x), \end{align*}\]where $\Re(\lambda)^2 < \abs{\lambda}^2$ because $\abs{\lambda}^2 = \Re(\lambda)^2 + \Im(\lambda)^2$, satisfying the required form of the quadratic.

For the inductive hypothesis to apply to $q$, we have to check that it has real coefficients. Noting that $(x-\lambda)(x-\bar \lambda) \neq 0$ for any $x \in \R$, we can rearrange the displayed expression to get

\[q(x) = \frac{p(x)}{x^2 - 2\Re (\lambda) x + \abs {\lambda}^2},\]which makes it clear that $q(x) \in \R$ for any $x \in \R$. Write $q(x) = a_0 + a_1 x + \dots + a _ {n-2} x^{n-2}$, where $n$ is the degree of $p$. Then we have

\[\Im q(x) = \Im (a_0) + \Im (a_1) x + \dots + \Im (a _ {n-2}) x^{n-2} = 0\]for all $x \in \R$, which implies by a previous result that $\Im(a_k) = 0$ for each $k$. So $q$ has real coefficients, and the induction holds. Because the quadratic factors of any factorization can be factored uniquely over $\C$, the factorization over $\R$ must be unique (otherwise it would violate uniqueness over $\C$).

-

-

If $b,c \in \R$ and $b^2 < 4c$, and $T \in \L(V)$ is self-adjoint, then $T^2 + bT + cI$ is invertible.

-

(Proof.) Because $V$ is finite-dimensional, it suffices to show injectivity, i.e. that $(T^2 + bT + cI)v \neq 0$ for every nonzero $v \in V$. We can write

\[\begin{align*} \inner{(T^2 + bT + cI)v}{v} &= \inner{T^2 v}{v} + \inner{bTv}{v} + \inner{cv}{v} \\ &= \inner{Tv}{Tv} + b\inner{Tv}{v} + c\norm{v}^2 \\ &\geq \norm{Tv}^2 - \abs{b}\abs{\inner{Tv}{v}} + c\norm{v}^2 \\ &\geq \norm{Tv}^2 - \abs{b}\norm{Tv}\norm{v} + c\norm{v}^2 \\ &= \left(\norm{Tv} - \frac{\abs{b}\norm{v}}{2}\right)^2 + \left(c - \frac{b^2}{ 4}\right) \norm{v}^2 \\ &> 0. \end{align*}\]This implies that $T^2 + bT + cI$ is invertible.
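
Self-adjointness cannot be dropped from this result. As an illustrative sketch on $\R^2$ with the standard inner product, let $T(x,y) = (-y,x)$ be rotation by $\pi/2$, which is not self-adjoint (its adjoint is $-T$). Taking $b = 0$ and $c = 1$, so that $b^2 = 0 < 4 = 4c$, we find

\[T^2(x,y) = T(-y,x) = (-x,-y), \qquad \text{so} \qquad T^2 + 0\cdot T + 1\cdot I = 0,\]

which is as far from invertible as possible. The step in the proof that fails is $\inner{T^2 v}{v} = \inner{Tv}{Tv}$, which uses $T = T\adj$.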

-

-

If $V \neq \{0\}$ and $T \in \L(V)$ is self-adjoint, then $T$ has an eigenvalue.

-

(Proof.) We may assume $\F = \R$, since over $\C$ every operator on a nonzero finite-dimensional space has an eigenvalue. We will build a polynomial $p(T)$ whose factorization over $\R$ includes at least one factor of the form $T - \lambda I$. Letting $n := \dim V$ and fixing a nonzero $v \in V$, we start with the list of vectors

\[v, Tv, T^2 v, \dots, T^n v\]whose length $n+1$ exceeds $\dim V$, so it is a linearly dependent list. Therefore we can write

\[\begin{align*} 0 &= a_0 v + a_1 Tv + a_2 T^2 v + \dots + a_n T^n v \\ &= (a_0 + a_1 T + a_2 T^2 + \dots + a_n T^n) v \\ \end{align*}\]with real coefficients that are not all zero. At least one nonzero coefficient $a_k$ has $k > 0$, since otherwise $a_0 v = 0$ with $a_0 \neq 0$, contradicting $v \neq 0$. So the polynomial is nonconstant, and we continue with the factorization

\[0 = c(T^2 + b_1 T + c_1 I)\cdots(T^2 + b_M T + c_M I)(T-\lambda_1 I ) \cdots (T - \lambda_m I)v\]where $c$ is a nonzero real number, $m + M \geq 1$, $b_j^2 < 4c_j$ for each $j$, and each $\lambda_j \in \R$. However, we know that each of the quadratic factors is invertible (by the previous result, since $T$ is self-adjoint). This implies that $m > 0$ and that $(T-\lambda_1 I ) \cdots (T - \lambda_m I)v = 0$, so $T - \lambda_j I$ fails to be injective for at least one $j$. In other words, $T$ has an eigenvalue.

-

-

(Self-adjoint operators and invariant subspaces.) Suppose $T \in \L(V)$ is self-adjoint and $U$ is a subspace of $V$ that is invariant under $T$. Then

- $U\uperp$ is invariant under $T$ (i.e., $T| _ {U\uperp}$ is an operator);

- Let $v \in U\uperp$ and $u \in U$. Then $\inner{Tv}{u} = \inner{v}{Tu} = 0$, because $Tu \in U$ by invariance. Hence $Tv \in U\uperp$.

- $T| _ U \in \L(U)$ is self-adjoint;

- For $u, w \in U$, we have $\inner{T| _ U u}{w} = \inner{Tu}{w} = \inner{u}{Tw} = \inner{u}{T| _ U w}$.

- $T| _ {U\uperp} \in \L(U\uperp)$ is self-adjoint.

- By $(1)$, we can replace $U$ with $U\uperp$ in $(2)$ to get $(3)$.

- (Real Spectral Theorem.) Provides a complete characterization of the self-adjoint operators over $\R$.

-

(Statement.) Suppose $\F = \R$ and $T \in \L(V)$. Then the following are equivalent:

- $T$ is self-adjoint.

- $V$ has an orthonormal basis consisting of eigenvectors of $T$.

- $T$ has a diagonal matrix with respect to some orthonormal basis of $V$.

- $(2) \implies (3)$: Known from discussion about diagonalizability.

- $(3) \implies (1)$: The conjugate transpose of $\M(T)$ is the same as $\M(T)$.

- $(1) \implies (2)$: We induct on $\dim V$. Because $T$ is self-adjoint, it has an eigenvector, so the claim is true for $\dim V = 1$. Now assume $\dim V = n > 1$ where the claim is true for dimensions less than $n$. Let $u$ be an eigenvector of $T$ normalized to be of unit length, where the existence of $u$ follows from $T$ being self-adjoint. Because $u$ is an eigenvector, $T| _ {\span(u)}$ is an operator; this fact combined with the self-adjointness of $T$ implies $T| _ {\span(u)\uperp}$ is a self-adjoint operator. Therefore, noting that $V = \span(u) \oplus \span(u)\uperp$, $V$ has an orthonormal basis of eigenvectors of $T$ consisting of $u$ adjoined to the orthonormal basis of eigenvectors of $T| _ {\span(u)\uperp}$ (which exists by the inductive hypothesis).
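
To illustrate the conclusion of the theorem, here is a plain-Python sketch for one symmetric matrix on $\R^2$ with the standard inner product. The matrix `A` and its eigenpairs are supplied by hand as assumptions of the example (nothing here computes eigenvalues); the code only checks that they form an orthonormal eigenbasis and that `A` is rebuilt from them.

```python
import math

A = [[2.0, 1.0],
     [1.0, 2.0]]                     # symmetric, hence self-adjoint over R

s = 1 / math.sqrt(2)
eigenpairs = [(1.0, [s, -s]),        # (eigenvalue, unit eigenvector), given by hand
              (3.0, [s, s])]

def matvec(M, x):
    """Apply the matrix M (a list of rows) to the vector x."""
    return [sum(a * b for a, b in zip(row, x)) for row in M]

def dot(x, y):
    """Real inner product on R^n."""
    return sum(a * b for a, b in zip(x, y))

# Residuals of the eigenvector equation A e = lambda e (should be near zero).
residuals = [max(abs(a - lam * b) for a, b in zip(matvec(A, e), e))
             for lam, e in eigenpairs]

# Gram matrix of the eigenvectors (should be the identity for an orthonormal basis).
gram = [[dot(e, f) for _, f in eigenpairs] for _, e in eigenpairs]

# Rebuild A as the sum of lambda * e e^T, i.e. a diagonal matrix in the eigenbasis.
rebuilt = [[sum(lam * e[i] * e[j] for lam, e in eigenpairs) for j in range(2)]
           for i in range(2)]
```

With respect to the basis formed by the two eigenvectors, the operator has the diagonal matrix with entries $1$ and $3$.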

-

- (Complete characterization of self-adjoint operators over $\C$.) A normal operator on a complex inner product space is self-adjoint if and only if all its eigenvalues are real.

- (Proof.) We showed previously that the eigenvalues of a self-adjoint operator are all real. So suppose $T$ is a normal operator on a complex inner product space with only real eigenvalues. Then by the Complex Spectral Theorem, there exists a choice of orthonormal basis such that $\M(T)$ is diagonal. In particular, because the eigenvalues are real, the diagonal entries of $\M(T)$ are real. Because $\M(T\adj)$, which is the conjugate transpose of $\M(T)$, is equal to $\M(T)$, $T$ must be self-adjoint.

-

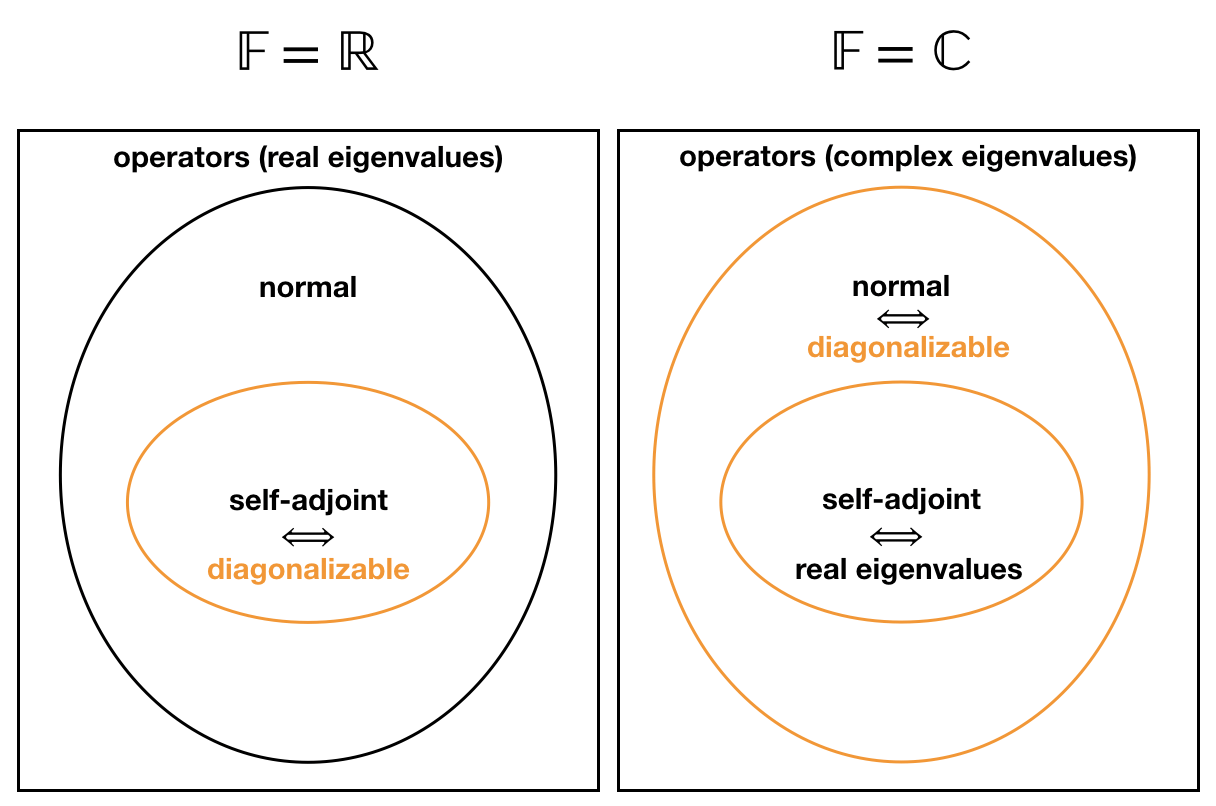

Illustration of the Spectral Theorems.