Operators and Inner Product Spaces (Linear Maps, pt 2)

This is a continuation of a condensed summary of linear algebra theory following Axler’s text. Part one covers the basics, up to Strang’s four fundamental subspaces. We continue with operators and inner product spaces.

Law professor Richard Friedman presenting a case before the U.S. Supreme Court in 2010 [1]:

Mr. Friedman: I think that issue is entirely orthogonal to the issue here because the Commonwealth is acknowledging—

Chief Justice Roberts: I'm sorry. Entirely what?

Mr. Friedman: Orthogonal. Right angle. Unrelated. Irrelevant.

Chief Justice Roberts: Oh.

Justice Scalia: What was that adjective? I liked that.

Mr. Friedman: Orthogonal.

Chief Justice Roberts: Orthogonal.

Mr. Friedman: Right, right.

Justice Scalia: Orthogonal, ooh. (Laughter.)

Justice Kennedy: I knew this case presented us a problem. (Laughter.)

Operators and eigenvalues

- Operators are linear maps from $V$ to itself.

- Suppose $T$ is an operator on $V$. A number $\lambda \in \F$ is called an eigenvalue of $T$ if there exists $v \in V$ such that $v \neq 0$ and $Tv = \lambda v.$

- The following are equivalent:

- $\lambda$ is an eigenvalue of $T;$

- $T - \lambda I$ is not injective; equivalently, for finite-dimensional $V$, $T - \lambda I$ is not surjective, or not invertible.

- If $\lambda_1,\dots,\lambda_m$ are distinct eigenvalues of $T$ and $v_1,\dots,v_m$ are corresponding eigenvectors, then $v_1,\dots,v_m$ is linearly independent.

- Assume not. Let $k$ be the smallest index such that $v_k \in \span(v_1,\dots,v _ {k-1})$, say $v_k = a_1 v_1 + \dots + a _ {k-1} v _ {k-1}$; call this equation $E$. Subtracting $T(E)$ from $\lambda_k E$ gives $0 = a_1(\lambda_k - \lambda_1)v_1 + \dots + a _ {k-1}(\lambda_k - \lambda _ {k-1})v _ {k-1}$. All the $a_i$ must be $0$, because $v_1,\dots,v _ {k-1}$ are independent (by minimality of $k$) and the $\lambda_i$ are distinct. This implies $v_k = 0$, contradicting the assumption that $v_k$ is an eigenvector.

- So each operator on $V$ has at most $\dim V$ distinct eigenvalues.

- Every operator on a finite-dimensional, nonzero, complex vector space has an eigenvalue.

- Let $V$ be such a vector space of dimension $n > 0$, let $T \in L(V)$, and consider a nonzero vector $v \in V$. The $n+1$ vectors $v, Tv, T^2v, \dots, T^nv$ are linearly dependent, so we can write $0 = a_0v + a_1Tv + \dots + a_nT^nv$, where the coefficients $a_i$ are not all $0$; moreover, $a_0$ cannot be the only nonzero coefficient because $v$ is nonzero. Hence the polynomial $a_0 + a_1 z + \dots + a_n z^n$ is nonconstant, and by the Fundamental Theorem of Algebra it factors as $c(z - \lambda_1)\cdots(z - \lambda_m)$ with $c \neq 0$ and $m \geq 1$. Substituting $T$ for $z$ gives $0 = c(T - \lambda_1 I)\cdots(T - \lambda_m I)v$. This implies $T-\lambda_i I$ is not injective for some $i$, and so $\lambda_i$ is an eigenvalue.
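As an illustrative instance of this argument (the operator and the vector are chosen here for illustration and are not from the source), take $T \in L(\C^2)$ defined by $T(x,y) = (-y,x)$ and $v = (1,0)$:

\[\begin{align*} v &= (1,0), \quad Tv = (0,1), \quad T^2 v = (-1,0), \\ 0 &= v + T^2 v = (T^2 + I)v = (T - iI)(T + iI)v. \end{align*}\]Here $(T + iI)v = (i,1) \neq 0$, so $T - iI$ is not injective. Indeed $T(i,1) = (-1,i) = i(i,1)$, so $\lambda = i$ is an eigenvalue with eigenvector $(i,1)$.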

- An operator can be represented as a matrix once one basis is specified (unlike general linear maps, which require two bases).

-

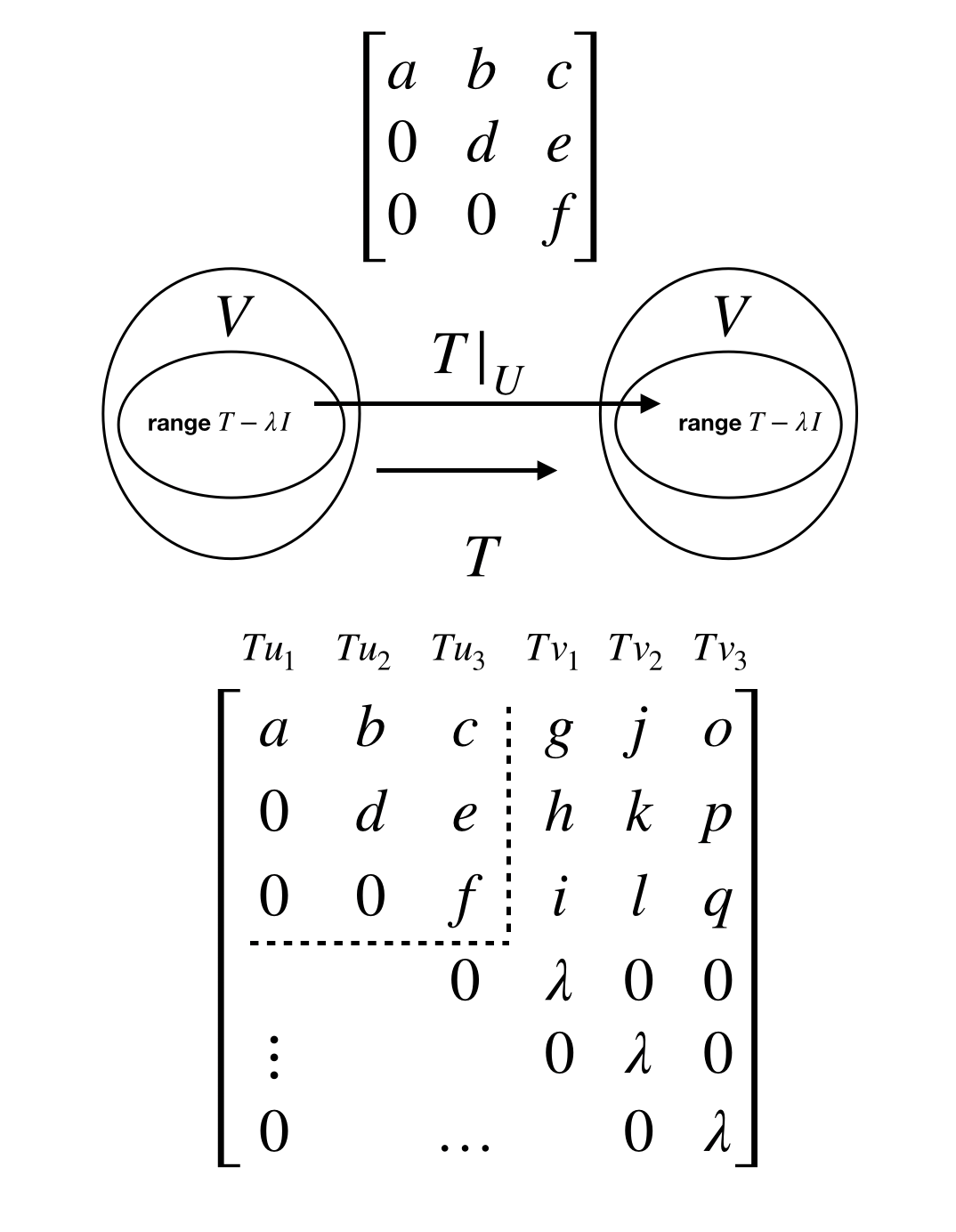

A matrix is called an upper-triangular matrix if every entry below the diagonal is 0.

- Consider the matrix $M(T)$ of $T \in L(V)$ with respect to a basis $v_1,\dots,v_n$ of $V$. Then the following statements are equivalent:

- $M(T)$ is upper-triangular.

- $T(v_k) \in \span (v_1,…,v _ k)$ for each $k$.

- $\span (v_1,…,v _ k)$ is invariant under $T$ for each $k$.

- Over $\C$, every operator on a finite-dimensional vector space has an upper-triangular matrix (i.e., one can always find a basis with respect to which the matrix is upper-triangular).

- Proof by induction on $\dim V$. The claim is clearly true for $\dim V = 1$. Now suppose it holds for every operator on a complex vector space of dimension less than $\dim V = k$, and consider $T \in L(V)$. We know $T$ has an eigenvalue $\lambda$. Let $U = \range (T-\lambda I)$. Because $T-\lambda I$ is not surjective, $\dim U < \dim V$. Observe that $U$ is invariant under $T$: for $u \in U$, we have $Tu = (T - \lambda I)u + \lambda u$, where $(T - \lambda I)u \in U$ by definition of $U$ and $\lambda u \in U$ because $U$ is a subspace. Therefore $T| _ U$ is an operator on $U$, and by the inductive hypothesis $U$ has a basis $u_1,\dots,u_m$ such that $T| _ U u_i \in \span (u_1,\dots,u_i)$ for each $i$.

Now extend this to a basis $u_1,\dots,u_m,v_1,\dots,v_n$ of $V$, where $m+n = k$; we now show that $T$ has an upper-triangular matrix with respect to this basis. For each $v_i$, $Tv_i = (T - \lambda I)v_i + \lambda v_i$, where $(T - \lambda I)v_i \in U$. Therefore $Tv_i \in \span (u_1,\dots,u_m,v_1,\dots,v_i)$.

- The intuition is the following. Every operator on a complex vector space has an eigenvalue, so we have a subspace $U = \range (T - \lambda I)$ strictly smaller than $V$, on which $T$ restricts to an operator $T| _ U$. We can build $M(T)$ from the upper-triangular $M(T | _ U)$ by exploiting the fact that $Tv_k \in U \oplus \span (v_k)$.

- Suppose $T \in L(V)$ has an upper-triangular matrix with respect to some basis. Then $T$ is invertible if and only if all the entries on the diagonal of that matrix are nonzero.

- $\implies$: Let $v_1,\dots,v_n$ be the basis and label the diagonal entries $\lambda_i$ for $1 \leq i \leq n = \dim V$. We know $\lambda_1 \neq 0$; otherwise $Tv_1 = 0$, violating invertibility. By way of contradiction, suppose $\lambda_j = 0$ for some $j > 1$. Then $T$ maps $\span (v_1,\dots,v_j)$ into $\span (v_1, \dots, v _ {j-1})$, i.e., $T| _ {\span (v_1,\dots,v_j)} \in L(\span (v_1,\dots,v_j),\span (v_1, \dots, v _ {j-1}))$. This restricted map sends a domain of dimension $j$ to a codomain of dimension $j-1$; by rank-nullity, there exists a nonzero $v \in \span (v_1,\dots,v_j)$ such that $Tv = 0$, violating invertibility of $T$.

- $\impliedby$: We know $v_1 \in \range T$ because $T(v_1/\lambda_1)=v_1$. Suppose $v_j \in \range T$ for all $1 \leq j \leq k$. Then $T(v _ {k+1}/\lambda _ {k + 1}) = a_1 v_1 + \dots + a_k v_k + v _ {k + 1}$. Rearranging to $v _ {k + 1} = \dots$, it is clear that $v _ {k + 1} \in \range T$. Therefore all the basis vectors are in $\range T$ implying $T$ is surjective.

- If $M(T)$ is upper-triangular, then the diagonal entries are precisely the eigenvalues of $T$.

- Let $\lambda_1,\dots,\lambda_n$ denote the diagonal entries of $M(T)$, and let $\lambda$ be any eigenvalue of $T$. Then $M(T-\lambda I)$ is upper-triangular with diagonal entries $\lambda_k - \lambda$. At least one of these must be $0$ because $T-\lambda I$ is not invertible. So any eigenvalue of $T$ must appear on the diagonal. Conversely, any diagonal entry $\lambda_k$ is an eigenvalue because $M(T-\lambda_k I)$ has a zero on its diagonal, so $T - \lambda_k I$ is not invertible.

- The eigenspace of $T$ corresponding to $\lambda \in \F$, denoted $E(\lambda,T)$, is defined by $E(\lambda, T) = \null (T - \lambda I)$.

- A diagonal matrix is a square matrix that is $0$ everywhere except possibly along the diagonal.

- An operator $T \in L(V)$ is called diagonalizable if it has a diagonal matrix with respect to some basis of $V$.

- Suppose $V$ is finite-dimensional and $T \in L(V)$. Let $\lambda_1,\dots,\lambda_m$ denote the distinct eigenvalues of $T$. Then the following are equivalent:

- $T$ is diagonalizable;

- $V$ has a basis consisting of eigenvectors of $T;$

- there exist $1$-dimensional subspaces $U_1,\dots,U_n$ of $V$, each invariant under $T$, such that $V = U_1 \oplus \cdots \oplus U_n;$

- $V = E(\lambda_1,T) \oplus \cdots \oplus E(\lambda_m,T);$

- $\dim V = \dim E (\lambda_1,T)+ \cdots + \dim E(\lambda_m, T)$.

- If $T \in L(V)$ has $\dim V $ distinct eigenvalues, then $T$ is diagonalizable.
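As a small illustration (the matrices below are chosen for this example and are not from the source), consider the operator $T$ on $\F^2$ whose matrix with respect to the standard basis is upper-triangular with distinct diagonal entries:

\[M(T) = \begin{pmatrix} 2 & 1 \\ 0 & 3 \end{pmatrix}.\]The eigenvalues are the diagonal entries $2$ and $3$, with eigenvectors $(1,0)$ and $(1,1)$, since $T(1,0) = (2,0)$ and $T(1,1) = (3,3)$. Because $\dim \F^2 = 2$ and there are two distinct eigenvalues, these eigenvectors form a basis, with respect to which the matrix of $T$ is

\[\begin{pmatrix} 2 & 0 \\ 0 & 3 \end{pmatrix}.\]By contrast, the operator with matrix $\begin{pmatrix} 1 & 1 \\ 0 & 1 \end{pmatrix}$ has $1$ as its only eigenvalue and $E(1,T) = \span((1,0))$, so $\dim E(1,T) = 1 < 2$ and it is not diagonalizable.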

Review: Properties of complex numbers

Suppose $w,z \in \C$. Then

- sum of $z$ and $\bar z$

- $z + \bar z = 2 \Re z;$

- difference of $z$ and $\bar z$

- $z - \bar z = 2(\Im z)i;$

- product of $z$ and $\bar z$

- $z \bar z = \abs {z}^2;$

- additivity and multiplicativity of complex conjugate

- $\overline{w + z} = \bar w + \bar z$ and $\overline{wz} = \bar w \bar z;$

- conjugate of conjugate

- $\bar {\bar z} = z;$

- real and imaginary parts are bounded by $\abs{z}$

- $\abs{\Re z } \leq \abs{z}$ and $\abs{ \Im z } \leq \abs{z};$

- absolute value of the complex conjugate

- $ \abs{\bar z} = \abs{ z };$

- multiplicativity of absolute value

- $ \abs{wz } = \abs{w}\abs{z};$

- Follows from multiplicativity of complex conjugate:

\[\begin{align*} \abs{wz}^2 &= wz\overline{wz} \\ &= w\bar w z \bar z\\ &= \abs{w}^2\abs{z}^2. \end{align*}\]

- On the complex plane, when multiplying two complex numbers, the angles add and lengths multiply.

- Triangle Inequality

- $\abs{ w + z } \leq \abs{w} + \abs{z}$.

- \[\begin{align*} | w + z |^2 &= (\bar w+ \bar z)(w+z) \\ &= |w|^2 + |z|^2 + \bar w z + \overline{\bar w z} \\ &= |w|^2 + |z|^2 + 2 \Re (\bar w z) \\ &\leq |w|^2 + |z|^2 + 2 |\bar w z| \\ &= |w|^2 + |z|^2 + 2 |w|| z| \\ &= (|w| + |z|)^2. \end{align*}\]
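The identities in this review can be spot-checked numerically with Python's built-in complex type. This is only a sanity check on two sample values (`w`, `z`, and the helper `close` are illustrative names, not from the source), not a proof.

```python
# Sample values chosen for illustration only.
w = 3 + 4j
z = 1 - 2j


def close(a, b, tol=1e-12):
    """Compare two (possibly complex) numbers up to rounding error."""
    return abs(a - b) <= tol


# z + conj(z) = 2 Re z  and  z - conj(z) = 2 (Im z) i
sum_ok = close(z + z.conjugate(), 2 * z.real)
diff_ok = close(z - z.conjugate(), 2 * z.imag * 1j)

# z conj(z) = |z|^2
prod_ok = close(z * z.conjugate(), abs(z) ** 2)

# Conjugation is additive and multiplicative.
conj_add_ok = close((w + z).conjugate(), w.conjugate() + z.conjugate())
conj_mul_ok = close((w * z).conjugate(), w.conjugate() * z.conjugate())

# |wz| = |w||z|  and  |w + z| <= |w| + |z|
abs_mul_ok = close(abs(w * z), abs(w) * abs(z))
triangle_ok = abs(w + z) <= abs(w) + abs(z)

print(all([sum_ok, diff_ok, prod_ok, conj_add_ok,
           conj_mul_ok, abs_mul_ok, triangle_ok]))
```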

Inner product spaces

- An inner product on $V$ is a function that takes each ordered pair $(u,v)$ of elements of $V$ to a number $\inner{u}{v} \in \F$ and has the following properties:

- positivity

- $\inner{ v}{v } \geq 0$ for all $v \in V;$

- definiteness

- $\inner{ v}{v } = 0$ if and only if $v =0;$

- additivity in the first slot

- $\inner{ u + v}{ w } = \inner{ u}{w } + \inner{ v}{w };$

- homogeneity in the first slot

- $\inner{ \lambda u}{ v } = \lambda \inner{ u}{v }$ for all $\lambda \in \F$ and all $u,v \in V;$

- conjugate symmetry

- $\inner{ u}{v } = \overline{\inner{ v}{ u }}$ for all $u,v \in V$.

- Example: The Euclidean inner product on $\F^n$ is defined by

- $\inner{ (w_1,\dots,w_n)}{(z_1,\dots,z_n)} = w_1 \overline{z_1} + \dots + w_n \overline{z_n}$.

- An inner product space is a vector space $V$ along with an inner product on $V$.

- Basic properties of inner products

- For each fixed $u \in V$, the function that takes $v$ to $\inner{ v}{ u }$ is a linear map from $V$ to $\F$.

- Follows from additivity and homogeneity in the first slot.

- $\inner{ 0}{ u } = 0$ for every $u \in V$.

- Follows from additivity in first slot.

- $ \inner{ u}{ 0 } = 0$ for every $u \in V$.

- Follows from additivity in second slot (see below).

- $\inner{ u}{ v+w } = \inner{ u}{v} + \inner{ u}{ w }$ for all $u,v,w \in V$.

- \[\begin{align*} \la u,v+w \ra &= \overline{\la v+w, u \ra} \\ &= \overline{\la v,u \ra + \la w, u \ra } \\ &= \overline{\la v,u \ra} + \overline{\la w, u \ra} \\ &= \la u,v \ra + \la u,w \ra. \end{align*}\]

- $\inner {u}{ \lambda v} = \bar{\lambda} \inner{ u}{v }$ for all $\lambda \in \F$ and $u,v \in V$.

- For $v \in V$, the norm of $v$, denoted $\norm{v}$, is defined by

- $\norm v = \sqrt {\inner{v}{v}}.$

- Basic properties of the norm

- $\norm v = 0$ if and only if $v = 0$.

- $\norm{\lambda v} = \abs{\lambda}\norm{v}$ for all $\lambda \in \F$.
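The Euclidean inner product and its norm can be sketched in plain Python, assuming vectors are represented as lists of numbers. The helper names `inner`, `norm`, and `scale` and the sample vectors are assumptions made for this example; the checks illustrate the listed properties on one input and do not prove them.

```python
import math


def inner(w, z):
    """Euclidean inner product on F^n: sum of w_k * conj(z_k)."""
    return sum(wk * complex(zk).conjugate() for wk, zk in zip(w, z))


def norm(v):
    """Norm induced by the inner product: sqrt(<v, v>)."""
    return math.sqrt(inner(v, v).real)


def scale(c, v):
    """Scalar multiple c * v."""
    return [c * vk for vk in v]


# Sample data chosen for illustration only.
u = [1 + 2j, 3j]
v = [2 - 1j, 1 + 1j]
lam = 2 - 3j

# Conjugate symmetry: <u, v> = conj(<v, u>)
conj_symmetry = abs(inner(u, v) - inner(v, u).conjugate()) < 1e-12
# Homogeneity in the first slot: <lam u, v> = lam <u, v>
first_slot = abs(inner(scale(lam, u), v) - lam * inner(u, v)) < 1e-12
# Conjugate homogeneity in the second slot: <u, lam v> = conj(lam) <u, v>
second_slot = abs(inner(u, scale(lam, v)) - lam.conjugate() * inner(u, v)) < 1e-12
# Norm homogeneity: ||lam v|| = |lam| ||v||
norm_homogeneity = abs(norm(scale(lam, u)) - abs(lam) * norm(u)) < 1e-12

print(conj_symmetry, first_slot, second_slot, norm_homogeneity)
```

Note that the conjugate sits on the second argument, matching the convention above that the inner product is linear in the first slot.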

- Two vectors $u,v \in V $ are called orthogonal if $\inner{u}{v} = 0$.

- Order doesn’t matter here: by conjugate symmetry and $\bar 0 = 0$, $\inner{u}{v} = 0$ if and only if $\inner{v}{u} = 0$.

- (Pythagorean Theorem, i.e. additivity of squared norms for orthogonal vectors.) Suppose $u$ and $v$ are orthogonal vectors in $V$. Then \(\norm{u + v}^2 = \norm{u}^2 + \norm{v}^2.\)

- \[\begin{align*} \norm{u + v}^2 &= \inner{u+v}{u+v} \\ &= \norm{u}^2 + \norm{v}^2 + \inner{u}{v} + \inner{v}{u} \\ &= \norm{u}^2 + \norm{v}^2 . \end{align*}\]

- Note that the converse is true for real inner product spaces. Namely, the equality holds if and only if $\inner{u}{v} + \inner{v}{u} = 0$. Observe that $\inner{u}{v} + \inner{v}{u} = 2 \Re \inner{u}{v}$, where $\Re \inner{u}{v} = \inner{u}{v}$ for real inner product spaces.

- The converse does not hold in general for complex inner product spaces: the equality holds whenever $\Re \inner{u}{v} = 0$, e.g., for non-orthogonal vectors $u,v$ satisfying $\inner{u}{v} = bi$ with real $b \neq 0$.
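A concrete instance of this failure (an example chosen here, not taken from the source): let $V = \C$ with $\inner{w}{z} = w \bar z$, and take $u = 1$, $v = i$. Then

\[\begin{align*} \inner{u}{v} &= 1 \cdot \bar{i} = -i \neq 0, \\ \norm{u + v}^2 &= \abs{1 + i}^2 = 2 = \norm{u}^2 + \norm{v}^2, \end{align*}\]so the Pythagorean equality holds even though $u$ and $v$ are not orthogonal.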

- Remark. Notice that many results about absolute values and norms are proved by first taking their squares. This is done to convert them to complex conjugates and inner products, respectively, both of which have properties that are easier to exploit.

-

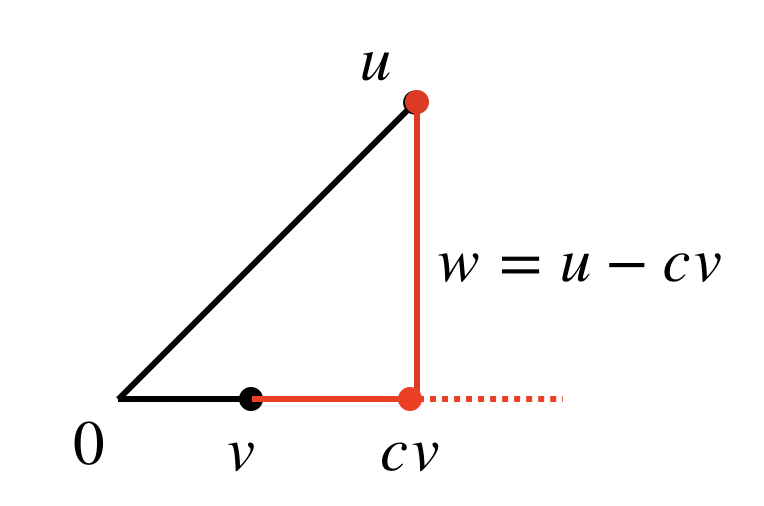

(Orthogonal decomposition.) Suppose $u,v \in V$, with $v \neq 0 $. We can decompose $u$ into $u = cv + (u - cv)$, where $c \in \F$, such that $\inner{cv}{u-cv} = 0$. This is achieved with $c=\frac{\inner{u}{v}}{\norm{v}^2}$; i.e., the orthogonal decomposition is

\[u = \frac{\inner{u}{v}}{\norm{v}^2}v + w,\]where $w = u - \frac{\inner{u}{v}}{\norm{v}^2}v$.

- $\inner{cv}{u - cv} = 0$ if and only if $\inner{u - cv}{v}$, so consider the latter for simplicity. $\inner{u-cv}{v} = \inner{u}{v} - c\norm{v}^2 = 0$ gives $c = \inner{u}{v}/\norm{v}^2.$
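The decomposition can be sketched in a few lines of Python, assuming the Euclidean inner product on lists of numbers. The function name `orthogonal_decomposition` and the sample vectors are hypothetical, introduced only for this example.

```python
def inner(w, z):
    """Euclidean inner product: sum of w_k * conj(z_k)."""
    return sum(wk * complex(zk).conjugate() for wk, zk in zip(w, z))


def orthogonal_decomposition(u, v):
    """Return (c, cv, w) with u = cv + w and <w, v> = 0; requires v != 0."""
    vv = inner(v, v).real  # ||v||^2
    if vv == 0:
        raise ValueError("v must be nonzero")
    c = inner(u, v) / vv  # c = <u, v> / ||v||^2
    parallel = [c * vk for vk in v]
    w = [uk - pk for uk, pk in zip(u, parallel)]
    return c, parallel, w


# Sample data chosen for illustration only.
u = [1 + 1j, 2]
v = [1, 1j]
c, parallel, w = orthogonal_decomposition(u, v)

print(c)                   # the scalar multiplying v
print(abs(inner(w, v)))    # should be 0 up to rounding
```

For these inputs, $\inner{u}{v} = 1 - i$ and $\norm{v}^2 = 2$, so $c = (1 - i)/2$.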

- (Cauchy-Schwarz Inequality, i.e. the absolute value of the inner product is bounded above by the product of norms.) Suppose $u,v \in V$. Then

- $\abs{\inner{u}{v}} \leq \norm{u}\norm{v}.$

- This inequality is an equality if and only if one of the $u,v$ is a scalar multiple of the other (i.e., $\norm w = 0$ below).

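- If $v = 0$, both sides equal $0$. Otherwise, apply the Pythagorean Theorem to the orthogonal decomposition $u = \frac{\inner{u}{v}}{\norm{v}^2}v + w$, where $\inner{w}{v} = 0$. We have

\[\begin{align*} \norm{u}^2 &= \norm{\frac{\inner{u}{v}}{\norm{v}^2}v}^2 + \norm{w}^2 \\ &= \frac{\abs{\inner{u}{v}}^2}{\norm{v}^2} + \norm{w}^2 \\ &\geq \frac{\abs{\inner{u}{v}}^2}{\norm{v}^2}. \end{align*}\]Multiplying both sides by $\norm{v}^2$ and taking square roots gives the inequality.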

- (Triangle Inequality, i.e., a vector’s norm is bounded above by the sum of the norms of its parts.) Suppose $u,v \in V$. Then

\[\norm{u + v} \leq \norm u + \norm v.\]

- Inequality becomes equality if and only if one of the $u,v$ is a nonnegative multiple of the other.

- \[\begin{align*} \norm{u+v}^2 &= \inner{u+v}{u+v} \\ &= \norm{u}^2 + \norm{v}^2 + \inner{u}{v} + \overline{\inner{u}{v}} \\ &= \norm{u}^2 + \norm{v}^2 + 2\Re \inner{u}{v} \\ &\leq \norm{u}^2 + \norm{v}^2 + 2\abs{\inner{u}{v}} \\ &\leq \norm{u}^2 + \norm{v}^2 + 2\norm{u}\norm{v} \text{ (by Cauchy-Schwarz)} \\ &= (\norm u + \norm v)^2. \end{align*}\]

-

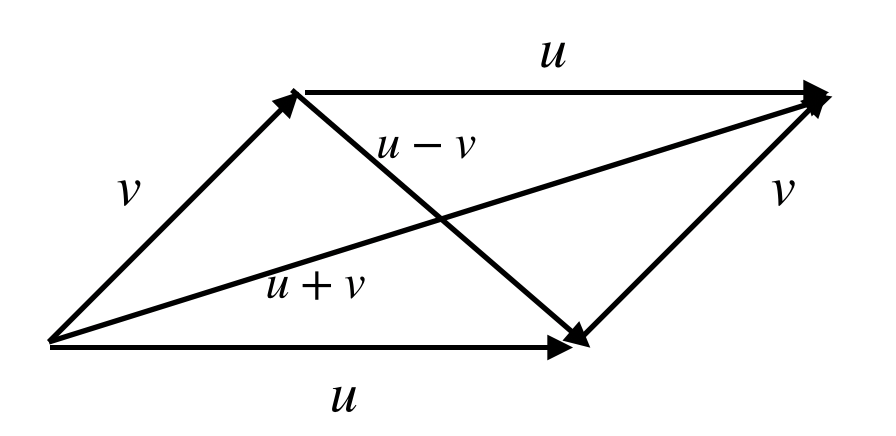

(Parallelogram Equality, i.e. the sum of the squares of a parallelogram’s diagonals equals the sum of the squares of the four sides.) Suppose $u,v \in V$. Then

\[\norm{u + v}^2 + \norm{u - v}^2 = 2(\norm{u}^2 + \norm{v}^2).\]

- \[\begin{align*} \norm{u + v}^2 + \norm{u - v}^2 &= \inner{u+v}{u+v} + \inner{u-v}{u-v} \\ &= \norm{u}^2 + \norm{v}^2 + \inner{u}{v} + \inner{v}{u} + \\ &\qquad \norm{u}^2 + \norm{v}^2 - \inner{u}{v} - \inner{v}{u} \\ &= 2(\norm{u}^2 + \norm{v}^2). \end{align*}\]
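The Cauchy-Schwarz Inequality, the Triangle Inequality, and the Parallelogram Equality can be spot-checked numerically. The sketch below assumes the Euclidean inner product on lists of complex numbers; all helper names and sample vectors are illustrative choices, and passing checks on one input is a sanity test, not a proof.

```python
import math


def inner(w, z):
    """Euclidean inner product: sum of w_k * conj(z_k)."""
    return sum(wk * complex(zk).conjugate() for wk, zk in zip(w, z))


def norm(v):
    """Norm induced by the inner product."""
    return math.sqrt(inner(v, v).real)


def add(u, v):
    return [uk + vk for uk, vk in zip(u, v)]


def sub(u, v):
    return [uk - vk for uk, vk in zip(u, v)]


# Sample data chosen for illustration only.
u = [1 + 2j, 3j, -1]
v = [2 - 1j, 1 + 1j, 4]

# |<u, v>| <= ||u|| ||v||
cauchy_schwarz = abs(inner(u, v)) <= norm(u) * norm(v)

# ||u + v|| <= ||u|| + ||v||
triangle = norm(add(u, v)) <= norm(u) + norm(v)

# ||u + v||^2 + ||u - v||^2 = 2(||u||^2 + ||v||^2)
lhs = norm(add(u, v)) ** 2 + norm(sub(u, v)) ** 2
rhs = 2 * (norm(u) ** 2 + norm(v) ** 2)
parallelogram = math.isclose(lhs, rhs)

# Equality case of Cauchy-Schwarz: v2 is a scalar multiple of u.
v2 = [(2 - 1j) * uk for uk in u]
equality_case = math.isclose(abs(inner(u, v2)), norm(u) * norm(v2))

print(cauchy_schwarz, triangle, parallelogram, equality_case)
```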

- A list $e_1,\dots,e_m$ of vectors in $V$ is orthonormal if

\[\inner{e_j}{e_k} = \begin{cases} 1 & \text{if } j = k,\\ 0 & \text{if } j \neq k. \end{cases}\]

- (Norm of orthonormal linear combination.) If $e_1,\dots,e_m$ is an orthonormal list of vectors in $V$, then

\[\norm{a_1 e_1 + \dots + a_m e_m}^2 = \abs{a_1}^2 + \dots + \abs{a_m}^2\]for all $a_1,\dots,a_m \in \F$.

- Use Pythagorean theorem repeatedly.

- Every orthonormal list of vectors is linearly independent.

- Take the norm of $a_1 e_1 + \dots + a_m e_m = 0$. We have $\abs{a_1}^2 + \dots + \abs{a_m}^2= 0$, implying $a_i$ are all $0$.

- (Importance of orthonormal bases.) Suppose $e_1,\dots,e_n$ is an orthonormal basis of $V$ and $v \in V$. Then

\[v = \inner{v}{e_1}e_1 + \dots + \inner{v}{e_n}e_n\]and

\(\norm{v}^2 = \abs{\inner{v}{e_1}}^2 + \dots + \abs{\inner{v}{e_n}}^2.\)

- I.e., the utility of the orthonormal basis is that the components of an arbitrary $v \in V$ can easily be expressed as inner products because $\inner{e_j}{e_k}=0$ for $j \neq k$ and $\norm{e_j}=1$. Then orthogonality enables the use of the Pythagorean theorem, allowing the norm to be similarly expressed.
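As a numerical illustration of this expansion, the sketch below uses one particular orthonormal basis of $\C^2$. The basis `e1`, `e2` and the vector `v` are choices made for this example, not taken from the source.

```python
import math


def inner(w, z):
    """Euclidean inner product: sum of w_k * conj(z_k)."""
    return sum(wk * complex(zk).conjugate() for wk, zk in zip(w, z))


# An orthonormal basis of C^2 (chosen for illustration).
s = 1 / math.sqrt(2)
e1 = [s, s * 1j]
e2 = [s, -s * 1j]

v = [2 + 1j, 1 - 3j]

# Coefficients are the inner products <v, e_j>.
coeffs = [inner(v, e) for e in (e1, e2)]

# Rebuild v from its coefficients: v = <v, e1> e1 + <v, e2> e2.
rebuilt = [coeffs[0] * a + coeffs[1] * b for a, b in zip(e1, e2)]

# ||v||^2 equals the sum of the squared absolute values of the coefficients.
norm_sq = inner(v, v).real
coeff_sq = sum(abs(c) ** 2 for c in coeffs)

print(rebuilt)
print(norm_sq, coeff_sq)
```

No linear system has to be solved to find the coefficients; each one is a single inner product.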

- (Gram-Schmidt Procedure converts linearly independent lists to orthonormal lists.) Suppose $v_1, \dots, v_m$ is a linearly independent list of vectors in $V$. Let $e_1 = v_1/\norm{v_1}$. For $j=2,\dots,m$, define $e_j$ inductively by

\[e_j = \frac{v_j - \inner{v_j}{e_1}e_1 - \dots - \inner{v_j}{e_{j-1}}e_{j-1}}{\norm{v_j - \inner{v_j}{e_1}e_1 - \dots - \inner{v_j}{e_{j-1}}e_{j-1}}}.\]Then $e_1,\dots,e_m$ is an orthonormal list of vectors in $V$ such that

\[\span(v_1,\dots,v_j) = \span(e_1,\dots,e_j)\]for $j=1,\dots,m$.

- First note that $\norm{e_1} = \frac{1}{\norm{v_1}} \norm{v_1} = 1$ (see “Basic properties of the norm”). Moreover $\span(e_1) = \span(v_1)$ because $e_1$ and $v_1$ are nonzero multiples of each other. Now assume for some $1 < j \leq m$ that $e_1,\dots,e _ {j-1}$ are orthonormal and $\span(e_1,\dots,e _ {j-1}) = \span(v_1,\dots,v _ {j-1})$. Then $e_j$ is well-defined: the denominator is nonzero because linear independence gives $v_j \notin \span(v_1,\dots,v _ {j-1}) = \span(e_1,\dots,e _ {j-1})$. Dividing a vector by its norm yields a vector of norm $1$, so $\norm{e_j} = 1$. Let $1 \leq k < j$. Then we have

\[\begin{align*} \inner{e_j}{e_k} &= \inner{\frac{v_j - \inner{v_j}{e_1}e_1 - \dots - \inner{v_j}{e_k}e_k - \dots - \inner{v_j}{e_{j-1}}e_{j-1}}{\norm{v_j - \inner{v_j}{e_1}e_1 - \dots - \inner{v_j}{e_{j-1}}e_{j-1}}}}{e_k} \\ &= \frac{\inner{v_j}{e_k} - \inner{v_j}{e_k}}{\norm{v_j - \inner{v_j}{e_1}e_1 - \dots - \inner{v_j}{e_{j-1}}e_{j-1}}} \\ &= 0. \end{align*}\]Therefore $e_1,\dots,e_j$ are orthonormal. Solving the definition of $e_j$ for $v_j$ shows that $v_j \in \span(e_1,\dots,e _ j)$; in conjunction with the hypothesis that $\span(e_1,\dots,e _ {j-1}) = \span(v_1,\dots,v _ {j-1})$, this implies that $\span(v_1,\dots,v_j) \subseteq \span(e_1,\dots,e_j)$. Both lists are linearly independent (the $e$'s because they are orthonormal) and have the same length $j$, so both spans have dimension $j$; hence $\span(v_1,\dots,v_j) = \span(e_1,\dots,e_j)$.
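The procedure translates fairly directly into code. Below is a minimal sketch of the classical Gram-Schmidt formula stated above, assuming the Euclidean inner product on lists of numbers; the function name `gram_schmidt`, the tolerance `tol`, and the sample input are assumptions made for this example.

```python
import math


def inner(w, z):
    """Euclidean inner product: sum of w_k * conj(z_k)."""
    return sum(wk * complex(zk).conjugate() for wk, zk in zip(w, z))


def norm(v):
    """Norm induced by the inner product."""
    return math.sqrt(inner(v, v).real)


def gram_schmidt(vectors, tol=1e-12):
    """Return an orthonormal list e_1..e_m built from v_1..v_m.

    Raises ValueError if the input list is (numerically) linearly dependent.
    """
    basis = []
    for v in vectors:
        # Numerator of the formula: v_j minus its components along e_1..e_{j-1}.
        residual = list(v)
        for e in basis:
            coeff = inner(v, e)  # <v_j, e_k>, computed from the original v_j
            residual = [rk - coeff * ek for rk, ek in zip(residual, e)]
        length = norm(residual)
        if length < tol:
            raise ValueError("input list is not linearly independent")
        # Denominator of the formula: normalize to length 1.
        basis.append([rk / length for rk in residual])
    return basis


# Sample input chosen for illustration only.
vs = [[1, 1, 0], [1, 0, 1], [0, 1, 1]]
es = gram_schmidt(vs)

for e in es:
    print(e)
```

This follows the formula literally. In floating-point arithmetic the "modified" variant, which computes each coefficient from the running residual instead of the original $v_j$, is usually preferred for numerical stability; that variant is not shown here.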

- Every finite-dimensional inner product space has an orthonormal basis.

- It has a basis, which can be converted to an orthonormal basis via Gram-Schmidt.

- Every orthonormal list of vectors in a finite-dimensional inner product space can be extended to an orthonormal basis.

- Extend the orthonormal list $e_1,\dots,e_m$ to a basis $e_1,\dots,e_m,f_1,\dots,f_n$ and apply Gram-Schmidt. The $e_j$ are left unchanged because they are already orthonormal.

- (Schur’s Theorem.) Suppose $V$ is a finite-dimensional complex inner product space and $T \in L(V)$. Then $T$ has an upper-triangular matrix with respect to some orthonormal basis of $V$.

- We know there is some basis $v_1,\dots,v_n$ of $V$ with respect to which $T$ has an upper-triangular matrix. This means $\span (v_1,\dots,v_j)$ is invariant under $T$ for each $j$. Apply Gram-Schmidt to $v_1,\dots,v_n$ to get the orthonormal basis $e_1,\dots,e_n$. We showed by induction that $\span(v_1,\dots,v_j) = \span(e_1,\dots,e_j)$, and so $ \span(e_1,\dots,e_j)$ is invariant under $T$. This is equivalent to stating that the matrix with respect to the orthonormal basis is upper-triangular.

Continued in Part Three.

[1] Reprinted from Axler’s text.